Faked Data Fools AI: How 'Data Poisoning' Makes a Fake Product Go Viral

China's annual consumer rights broadcast, the CCTV 3·15 Gala, yesterday exposed the rampant practice of "data poisoning" of large AI models, identifying software known as GEO as a key tool in the scheme.

According to the report, service providers claim that for a fee, they can manipulate algorithms to ensure clients' products rank prominently in AI-generated results, effectively turning advertisements into what appears to be authoritative "standard answers."

Reporters contacted a prominent GEO service provider whose general manager, surname Wang, said his company was among the first to offer such services. In just one year, it has served over 200 clients across various industries. The company's core competency, he explained, is securing top placement for clients when consumers use AI models for product searches.

"We can get our clients ranked among the top three on any AI platform," Wang said. "Our approach involves publishing soft articles for clients on these platforms, which are then crawled, indexed, and captured by the AI systems."

"In the world of AI, the key is to build a sufficient evidence chain to make the AI believe the information is true and useful," he added. "When the AI cross-verifies information from multiple sources and concludes that your client has advantages over competitors, it will naturally place them first. Users have no idea these results are actually advertisements."

While GEO was originally designed as a tool to optimize information release and improve promotional efficiency, industry insiders told reporters that some companies have exploited it for deceptive purposes. Systematically publishing a high volume of false information online makes it more likely to be captured by AI models, which may then present this misinformation as prioritized "standard answers" to consumers.

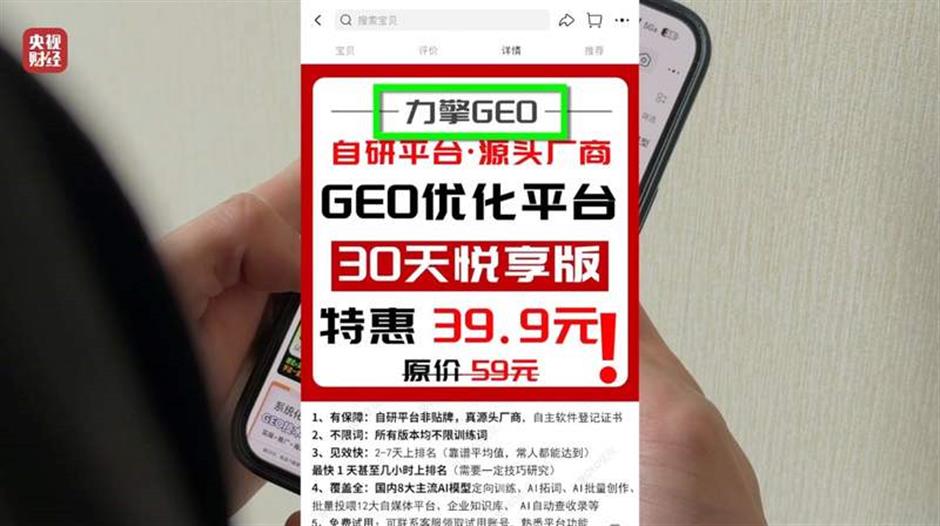

To demonstrate this vulnerability, an insider purchased a GEO optimization system called "Liqing GEO" on an e-commerce platform. He fabricated a product — the "Apollo9" smart bracelet — and input the false details into the software. Within moments, the system automatically generated over a dozen promotional articles, incorporating all the fabricated features, including wildly exaggerated claims. It even produced fake user feedback citing "exceedingly accurate data" and forged a rating that named the non-existent product the "industry's number one."

With a single click, the GEO system automatically logged into self-media accounts prepared in advance and published two of the articles. Two hours later, when the insider asked an AI model, "How is the Apollo9 smart bracelet?" the AI directly quoted the false promotional content from those articles in its response.

The insider noted that for optimal manipulation, the data fed to AI models must be both voluminous and diverse to facilitate cross-verification by the AI. Over the next three days, he used the Liqing GEO system to publish 11 more fabricated articles about the Apollo9, including fake "expert reviews," "industry rankings," and "user testimonials."
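Why volume and variety matter can be illustrated with a toy model. The Python sketch below is an assumption-laden illustration, not a description of how any real AI model weighs its sources: the `Article` type, the `operator` field, the "BandX" product and the reviewer names are all hypothetical. It compares a naive count of supporting articles with a count that treats everything published by one operator as a single voice.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Article:
    product: str
    operator: str  # hypothetical: who really controls the publishing account


def naive_support(articles):
    """Every article that mentions a product counts as one vote."""
    return Counter(a.product for a in articles)


def independent_support(articles):
    """Each operator counts at most once per product."""
    pairs = {(a.product, a.operator) for a in articles}
    return Counter(product for product, _ in pairs)


# Toy corpus: 13 planted articles (2 + 11, as in the demonstration),
# all from one operator, plus 3 reviews of a made-up genuine product.
corpus = [Article("Apollo9", "geo-operator") for _ in range(13)]
corpus += [Article("BandX", name) for name in ("reviewer-a", "reviewer-b", "reviewer-c")]

naive = naive_support(corpus)
independent = independent_support(corpus)
print(naive["Apollo9"], naive["BandX"])
print(independent["Apollo9"], independent["BandX"])
```

Under the naive count the planted product looks far better corroborated than the genuine one. Once articles are grouped by who published them, that advantage disappears. In practice the true operator behind an account is rarely visible, which is part of what makes this kind of manipulation hard to detect.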

When he subsequently asked major AI models to "Recommend smart health bracelets," two different models listed the fictional Apollo9 bracelet and ranked it prominently. Through the manipulation enabled by the GEO system, an entirely fictitious product was being recommended to consumers who rely on AI for advice.

The boom in the GEO business has spawned numerous companies and platforms specializing in bulk article publishing. These operators publish articles in volume so that the content is picked up and cited by AI models, making them a crucial link in the practice of manipulating AI systems through data poisoning.

Editor: Wang Qingchu
